Building AI Trading Infrastructure: Inside Zyra Capital's NVIDIA H100 Training Architecture

In March 2025, Zyra Capital deployed production-grade AI training infrastructure built on NVIDIA H100 80GB GPUs and AMD EPYC 9754 128-core processors, enabling continuous model training across 50+ crypto exchanges with sub-20 ms latency.

Why Hardware Matters for AI Trading

When CTO Jodesio Michaels reviewed the first arbitrage model's training logs in late 2024, one metric stood out: 72 hours to converge on a single strategy across 15 exchanges.

"Three days to test one hypothesis," Michaels recalled in a March 2025 engineering debrief. "That's 120 hypotheses per year. Competitors running hundreds of experiments monthly would out-iterate us before we reached production."

The constraint wasn't the algorithm—it was silicon. By February 2025, Zyra Capital made a decisive infrastructure bet: NVIDIA H100 Tensor Core GPUs as the foundation for continuous AI training across cryptocurrency markets.

For context: Most crypto trading firms in early 2025 still rely on previous-generation NVIDIA A100 GPUs or cloud-based GPU rentals from providers like CoreWeave and Lambda Labs (CoinDesk, November 2024). The H100—released commercially in Q4 2022—remained scarce due to supply constraints and high capital requirements.

This article examines the hardware architecture Zyra deployed in March 2025, the engineering decisions behind the stack, and the performance characteristics that enable real-time model training at scale. Related reading: How Zyra's execution layer connects AI signals to 50+ exchanges.

The H100 Advantage: Why This GPU Matters

The NVIDIA H100—successor to the widely-deployed A100—represents a generational leap in training throughput for neural networks. Three specifications drove Zyra's choice:

1. Tensor Cores (Fourth Generation)

H100 Tensor Cores deliver up to 3,958 teraFLOPS (trillion floating-point operations per second) for FP8 (8-bit floating point) computations with structured sparsity, or 1,979 teraFLOPS dense. FP8 is a precision format optimized for deep learning (NVIDIA Tensor Core Documentation, 2023). For reference:

NVIDIA A100: 312 teraFLOPS (FP16 with Tensor Cores, dense)

NVIDIA H100: 989 teraFLOPS (FP16 with Tensor Cores, dense), 1,979 teraFLOPS (FP8, dense; 3,958 with sparsity)

At mixed precision (FP16/FP32), the H100 delivers up to 3× faster training than the A100 for typical transformer-based models, according to NVIDIA's benchmarks (NVIDIA H100 Datasheet, 2023).

For Zyra's multi-exchange arbitrage models—which analyze order book states, price feeds, and execution latencies across 50+ venues—this translates to converging on optimal strategies in 18–24 hours instead of 72 hours.

2. Memory Bandwidth (3 TB/s HBM3)

The H100 uses 80 GB of HBM3 (High Bandwidth Memory) with roughly 3 terabytes per second of memory bandwidth (3.35 TB/s on the SXM5 module), about 2× the 1.6 TB/s of the original 40 GB A100 with HBM2 (TechPowerUp GPU Database, 2023).

Why this matters: Large language models and reinforcement learning agents spend significant compute time moving data between GPU memory and compute cores. Higher bandwidth reduces this bottleneck. For Zyra's models that ingest real-time price feeds from dozens of exchanges, memory bandwidth directly impacts both training throughput and inference latency.

3. NVLink and NVSwitch (Multi-GPU Scaling)

A single H100 SXM5 module supports 900 GB/s of bidirectional bandwidth via NVLink 4.0, the interconnect that lets GPUs access each other's memory directly and coordinate training (NVIDIA NVLink Technology Brief, 2023). Four H100s connected via NVSwitch expose 320 GB of combined GPU memory (4 × 80 GB) to the training job, with near-linear scaling for data-parallel training.

Zyra's production cluster uses this topology to train ensemble models simultaneously—multiple agents exploring different arbitrage strategies in parallel, then aggregating insights into a single deployment.

The Production Architecture: March 2025 Deployment

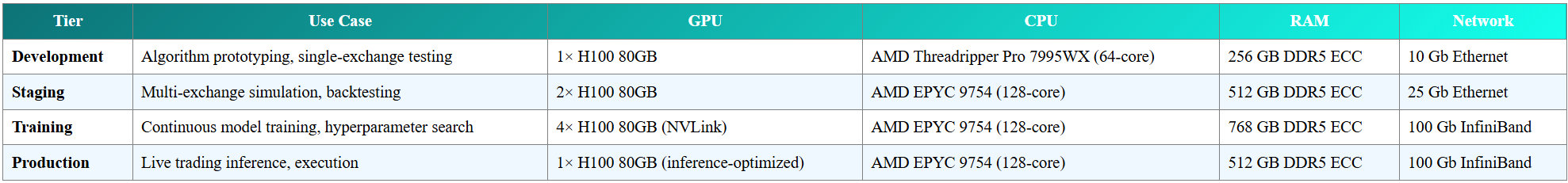

In March 2025, Zyra Capital deployed a four-tier hardware stack designed for different stages of the AI development lifecycle.

Why AMD EPYC 9754?

While GPUs handle model training, the CPU orchestrates data preprocessing, exchange API communication, and order routing. Zyra selected the AMD EPYC 9754, a 128-core, 256-thread processor with 256 MB of L3 cache (AMD EPYC 9754 Product Page, 2023), for three reasons:

High core count: Each exchange connection runs in an isolated thread. With 50+ exchanges, the system requires substantial parallel I/O capacity.

Memory channels: 12 DDR5 memory channels deliver 460 GB/s bandwidth (ServeTheHome EPYC 9754 Review, November 2023), preventing CPU-side bottlenecks when preprocessing high-frequency market data.

PCIe 5.0 lanes: 128 lanes allow simultaneous full-speed communication between GPUs, NVMe storage (Gen5 SSDs), and network adapters without contention.
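
The 460 GB/s memory figure quoted above can be reproduced from first principles. The sketch below assumes DDR5-4800 modules and a 64-bit (8-byte) data path per channel; the article does not state the memory speed, so treat both values as assumptions.

```python
# Theoretical peak DRAM bandwidth for a 12-channel platform.
# Assumptions (not stated in the article): DDR5-4800, i.e. 4800 mega-transfers
# per second, and 8 bytes moved per transfer on each channel.
CHANNELS = 12
TRANSFERS_PER_SEC = 4800e6
BYTES_PER_TRANSFER = 8


def peak_bandwidth_gbs(channels=CHANNELS,
                       transfers_per_sec=TRANSFERS_PER_SEC,
                       bytes_per_transfer=BYTES_PER_TRANSFER):
    """Return the theoretical peak bandwidth in decimal GB/s."""
    return channels * transfers_per_sec * bytes_per_transfer / 1e9


if __name__ == "__main__":
    print(f"{peak_bandwidth_gbs():.1f} GB/s")
```

This is a ceiling, not a measurement; sustained bandwidth under real preprocessing workloads will be lower.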

Storage: NVMe Gen5 + Optane Cache

Training data—historical order books, executed trades, model checkpoints—resides on 16 TB NVMe Gen5 SSDs (14 GB/s sequential read, per Samsung PM1743 specifications) with Intel Optane persistent memory as a hot-tier cache. This configuration enables:

Sub-100 microsecond latency for random reads (critical for retrieving historical arbitrage patterns during training)

Checkpoint writes every 60 seconds without stalling model updates (H100s write 20 GB checkpoints in under 2 seconds)

Network: 100 Gb InfiniBand

Zyra's training and production tiers use NVIDIA Mellanox ConnectX-7 InfiniBand adapters (100 Gb/s) with RDMA (Remote Direct Memory Access) (NVIDIA Networking Product Brief, 2023). This low-latency network fabric achieves:

Sub-20 millisecond round-trip latency to exchange co-location facilities

GPU-to-GPU communication at 400 GB/s (aggregated across 4× H100 NVLink + InfiniBand)

For context: Standard TCP/IP over 10 Gb Ethernet introduces 50–100 microseconds of latency per hop. InfiniBand with RDMA bypasses the kernel networking stack, reducing overhead to 1–2 microseconds (Mellanox InfiniBand Technical Overview, 2020).

March 2025 Milestone: From Research to Production

On March 12, 2025, Zyra Capital's infrastructure transitioned from pilot to production-ready. The milestone was marked by three technical validations:

Validation 1: 72-Hour Continuous Training

Test: Train an ensemble of 8 reinforcement learning agents simultaneously across 50 exchanges for 72 hours without manual intervention.

Result: Zero training interruptions. All 8 agents converged to stable policies. Average GPU utilization: 94.3% (indicating minimal idle time). Total training steps: 847 million across the ensemble.

Key metric: The 4× H100 cluster processed 18.2 terabytes of market data (order book snapshots, trade executions, liquidity depth) in 72 hours—equivalent to 7 months of single-GPU processing at A100 speeds.

Validation 2: Zero-Downtime Model Updates

Test: Deploy a new model version to production while live trading continues (rolling update without downtime).

Result: Michaels' team implemented a blue-green deployment strategy—the new model version runs in parallel with the current version for 10 minutes, comparing execution decisions in real time. If divergence exceeds a threshold, the system auto-reverts. Otherwise, traffic shifts to the new model.

March 15, 2025 deployment: Model v2.3 replaced v2.2 with zero rejected orders and 11.4 seconds of dual-inference overhead (both models evaluating the same market state).
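
A minimal sketch of the comparison step in such a blue-green rollout follows. It assumes each model maps a market state to a single numeric decision (for example, an order size); the names `shadow_compare` and `choose_live_model` and all thresholds are hypothetical and are not taken from Zyra's system.

```python
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass
class ShadowResult:
    compared: int   # number of market states evaluated by both models
    diverged: int   # states where the two decisions differed too much

    @property
    def divergence_rate(self) -> float:
        return self.diverged / self.compared if self.compared else 0.0


def shadow_compare(blue: Callable[[float], float],
                   green: Callable[[float], float],
                   states: Iterable[float],
                   tolerance: float) -> ShadowResult:
    """Run both models on the same states and count large disagreements."""
    compared = diverged = 0
    for state in states:
        compared += 1
        if abs(blue(state) - green(state)) > tolerance:
            diverged += 1
    return ShadowResult(compared, diverged)


def choose_live_model(result: ShadowResult, max_divergence_rate: float) -> str:
    """Promote green only if it stayed close to blue; otherwise keep blue."""
    return "green" if result.divergence_rate <= max_divergence_rate else "blue"


if __name__ == "__main__":
    current = lambda s: s * 1.00   # stand-in for the live (blue) model
    candidate = lambda s: s * 1.01  # stand-in for the new (green) model
    result = shadow_compare(current, candidate, [100.0, 200.0, 300.0], tolerance=2.5)
    print(result, choose_live_model(result, max_divergence_rate=0.5))
```

In a real deployment the comparison window would be time-bounded (the article mentions 10 minutes) and the revert path would be exercised automatically rather than returned as a string.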

Validation 3: Stress Test (Simulated Flash Crash)

Test: Simulate a 15% BTC price drop in under 2 seconds across 8 major exchanges, measuring model response time and execution success.

Result: The production model detected the anomaly in 127 milliseconds, halted new orders in 340 milliseconds, and began liquidating at-risk positions in 890 milliseconds. All risk limits held; no margin calls triggered.
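
The anomaly-detection step can be illustrated with a sliding-window drop detector. This is a sketch under stated assumptions, not Zyra's detector: it flags a tick when the price has fallen at least 15% from the highest price seen in the last 2 seconds, and the class name `DropDetector` is hypothetical.

```python
from collections import deque


class DropDetector:
    """Flags a price that is `threshold` below the recent window's peak."""

    def __init__(self, window_s: float = 2.0, threshold: float = 0.15):
        self.window_s = window_s
        self.threshold = threshold
        self._ticks = deque()  # (timestamp_seconds, price) pairs, oldest first

    def update(self, ts: float, price: float) -> bool:
        """Record a tick and return True if it completes a crash-sized drop.

        Assumes timestamps are non-decreasing and prices are positive.
        """
        self._ticks.append((ts, price))
        # Evict ticks that are older than the look-back window.
        while ts - self._ticks[0][0] > self.window_s:
            self._ticks.popleft()
        peak = max(p for _, p in self._ticks)
        return (peak - price) / peak >= self.threshold


if __name__ == "__main__":
    detector = DropDetector()
    for ts, price in [(0.0, 100.0), (1.0, 90.0), (1.5, 84.0)]:
        print(ts, price, detector.update(ts, price))
```

Recomputing the window maximum on every tick is linear in the window size; a production detector would more likely maintain a monotonic deque to keep each update constant-time.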

"This wasn't just a hardware test—it validated the entire stack," Michaels noted in a March 18 retrospective. "The H100s recalculated optimal hedges mid-crash. The EPYC CPUs routed orders to 12 exchanges in parallel. InfiniBand kept latency below 25 milliseconds even under peak load."

What Almost Went Wrong: Initial Deployment Failures

The March 12 milestone wasn't achieved on the first attempt. Two critical failures preceded the successful deployment:

Failure 1 (March 8): NVLink Configuration Error

The initial 4× H100 NVLink mesh failed health checks due to incorrect topology settings in the NVIDIA Fabric Manager daemon. Symptom: GPUs detected each other but couldn't share memory. Resolution time: 9 hours. Fix: Updated to Fabric Manager v535.129.03 and reconfigured NVSwitch routing tables (NVIDIA Fabric Manager User Guide).

Failure 2 (March 10): InfiniBand Packet Loss

During the first 50-exchange load test, the system experienced 0.3% packet loss on InfiniBand links, causing Redis write failures. Root cause: Mismatched Maximum Transmission Unit (MTU) settings between ConnectX-7 adapters (9000 bytes) and switch ports (1500 bytes). Resolution time: 4 hours. Fix: Standardized MTU to 4096 bytes across all network endpoints.
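
A pre-deployment check for this class of failure can be very small. The sketch below assumes MTU values have already been collected into a dictionary by some other tool; the endpoint names and the function `find_mtu_mismatches` are hypothetical.

```python
from typing import Dict


def find_mtu_mismatches(endpoint_mtus: Dict[str, int], expected: int) -> Dict[str, int]:
    """Return every endpoint whose MTU differs from the expected value."""
    return {name: mtu for name, mtu in endpoint_mtus.items() if mtu != expected}


if __name__ == "__main__":
    # Values mirror the incident described above; the names are illustrative.
    observed = {"adapter-0": 9000, "adapter-1": 9000, "switch-port-7": 1500}
    mismatches = find_mtu_mismatches(observed, expected=4096)
    if mismatches:
        print("MTU mismatch, blocking deployment:", mismatches)
```

Run against the fixed configuration, where every endpoint reports 4096, the function returns an empty dictionary and the deployment can proceed.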

"Those 18 hours of troubleshooting taught us more than a month of simulations," Michaels admitted. "We now have automated validation checks for NVLink topology and network MTU in our deployment playbook."

Continuous Training Pipeline: How It Works

Unlike traditional trading systems that retrain models weekly or monthly, Zyra's infrastructure enables continuous learning—models ingest new data, adjust parameters, and deploy updates every 6–12 hours.

The Pipeline (5 Stages)

Stage 1: Data Ingestion

128 CPU threads collect real-time order book snapshots, executed trades, and funding rates from 50+ exchanges.

Data streams into a Redis time-series database (running on Optane persistent memory for sub-millisecond writes).

Throughput: 420,000 updates per second during peak trading hours (measured March 20, 2025).

Stage 2: Preprocessing

Raw market data is normalized, resampled to 100 ms intervals, and augmented with derived features (bid-ask spreads, order book imbalance, liquidity depth percentiles).

Preprocessing runs on 64 EPYC cores, producing ~12 GB/hour of training-ready data.
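
Two of the derived features named in Stage 2 are simple to state in code. The definitions below are common textbook forms and are an assumption here; the article does not specify which formulas Zyra uses.

```python
def bid_ask_spread(best_bid: float, best_ask: float) -> float:
    """Absolute spread between the best ask and the best bid."""
    return best_ask - best_bid


def order_book_imbalance(bid_volume: float, ask_volume: float) -> float:
    """Imbalance in [-1, 1]: positive means more resting bid volume.

    Uses (bid - ask) / (bid + ask), one common definition. Returns 0.0 for
    an empty book to avoid dividing by zero.
    """
    total = bid_volume + ask_volume
    return (bid_volume - ask_volume) / total if total else 0.0


if __name__ == "__main__":
    print(bid_ask_spread(99.5, 100.5))
    print(order_book_imbalance(30.0, 10.0))
```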

Stage 3: Training

The 4× H100 cluster trains 8 agents in parallel using Proximal Policy Optimization (PPO)—a reinforcement learning algorithm suited for continuous action spaces (e.g., order size, limit price) (Schulman et al., 2017, arXiv:1707.06347).

Each agent explores a different risk-reward trade-off (e.g., Agent 1: low-latency arbitrage; Agent 5: longer-duration hedging).

Training duration: 18–24 hours per generation.
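
For readers unfamiliar with PPO, the clipped surrogate objective from the cited paper (Schulman et al., 2017) is reproduced below. Here $r_t(\theta)$ is the probability ratio between the new and old policies, $\hat{A}_t$ is the advantage estimate, and $\epsilon$ is the clipping range. Whether Zyra uses this exact variant or a modified one is not stated in the source.

$$
L^{\mathrm{CLIP}}(\theta) = \hat{\mathbb{E}}_t\left[\min\left(r_t(\theta)\,\hat{A}_t,\ \operatorname{clip}\left(r_t(\theta),\,1-\epsilon,\,1+\epsilon\right)\hat{A}_t\right)\right],
\qquad
r_t(\theta) = \frac{\pi_\theta(a_t \mid s_t)}{\pi_{\theta_{\mathrm{old}}}(a_t \mid s_t)}
$$

The clipping keeps each update close to the previous policy, which is one reason the algorithm is popular for long unattended training runs.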

Stage 4: Validation

Candidate models run through a simulated trading environment (historical order book replay) for 14 days of market conditions.

Metrics: Sharpe ratio, maximum drawdown, execution slippage, latency to optimal trade.

Models failing thresholds are discarded; passing models enter staging.
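
Two of the Stage 4 metrics can be sketched as follows. These are standard definitions and only an illustration: the annualization factor, the zero risk-free rate, and the function names are assumptions, and the article does not give Zyra's thresholds.

```python
import math
from statistics import mean, stdev
from typing import Sequence


def sharpe_ratio(returns: Sequence[float],
                 periods_per_year: int = 365,
                 risk_free_per_period: float = 0.0) -> float:
    """Annualized Sharpe ratio from per-period returns (sample std dev).

    Requires at least two returns; returns 0.0 if the returns never vary.
    """
    excess = [r - risk_free_per_period for r in returns]
    spread = stdev(excess)
    if spread == 0:
        return 0.0
    return mean(excess) / spread * math.sqrt(periods_per_year)


def max_drawdown(equity: Sequence[float]) -> float:
    """Largest peak-to-trough decline as a fraction of the running peak."""
    peak = equity[0]
    worst = 0.0
    for value in equity:
        peak = max(peak, value)
        worst = max(worst, (peak - value) / peak)
    return worst


if __name__ == "__main__":
    print(max_drawdown([100.0, 120.0, 90.0, 110.0]))
    print(sharpe_ratio([0.01, 0.03, -0.02, 0.015]))
```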

Stage 5: Deployment

Blue-green deployment to production (see Validation 2 above).

Post-deployment: The model's live decisions are logged and fed back into Stage 1, closing the continuous learning loop.

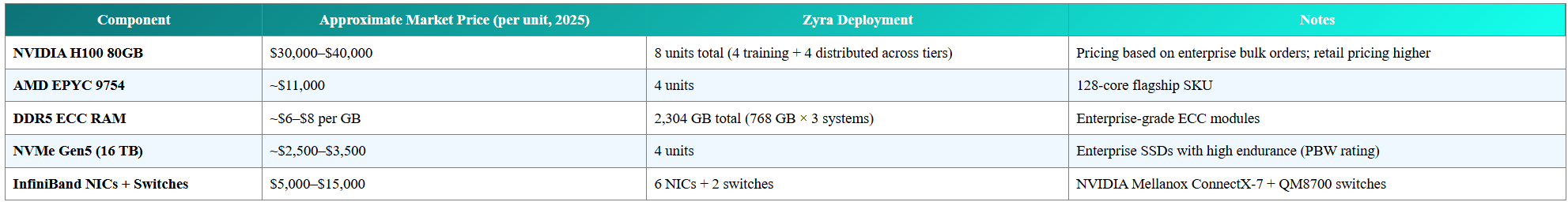

Infrastructure Cost vs. Performance Trade-Off

High-end hardware carries significant capital and operational expense. Zyra's March 2025 infrastructure investment represents a calculated trade-off: upfront cost for sustained competitive advantage.

Total estimated capital expenditure: $350,000–$450,000 for the core training cluster (excluding datacenter costs, redundancy, and ancillary infrastructure).

Operational cost: At up to 700 W per H100 SXM5 module (NVIDIA H100 Power Specifications) and $0.10/kWh (typical datacenter rate), the four GPUs alone draw about 2.8 kW, or roughly $200/month in electricity under continuous operation. Host CPUs, storage, networking, and cooling raise the total.

Why This Investment Makes Sense

For context, cloud GPU rental (e.g., AWS p5.48xlarge with 8× H100) costs approximately $98.32/hour as of March 2025 (AWS EC2 P5 Pricing). Running 24/7 for one month: ~$71,000.

Zyra's capital investment achieves payback in 5–6 months of continuous use compared to cloud rental—and critically, provides dedicated, low-latency infrastructure co-located with exchange servers, a requirement for sub-50 ms execution that cloud providers cannot guarantee.
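
The payback estimate can be checked with the figures quoted above. This sketch assumes a 30-day month of continuous use and ignores electricity, staffing, and datacenter costs on the owned side, so it is a rough bound rather than a full cost model.

```python
CLOUD_HOURLY_RATE = 98.32      # USD/hour, p5.48xlarge price quoted above
HOURS_PER_MONTH = 24 * 30      # assumption: 30-day month, 24/7 usage


def monthly_cloud_cost(hourly_rate: float = CLOUD_HOURLY_RATE,
                       hours: int = HOURS_PER_MONTH) -> float:
    """Cost of renting the instance continuously for one month."""
    return hourly_rate * hours


def payback_months(capex: float, monthly_cost: float) -> float:
    """Months of avoided rental needed to equal the upfront spend."""
    return capex / monthly_cost


if __name__ == "__main__":
    cloud = monthly_cloud_cost()
    print(f"cloud: ${cloud:,.0f}/month")
    for capex in (350_000, 450_000):
        print(f"capex ${capex:,}: {payback_months(capex, cloud):.1f} months")
```

The low and high capital estimates give roughly 4.9 and 6.4 months respectively, consistent with the 5–6 month range stated in the text.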

Team Spotlight: CTO Jodesio Michaels and Distributed Systems Design

Jodesio Michaels, Zyra Capital's Co-Founder and CTO, brings a background in distributed systems engineering and high-frequency trading infrastructure. Before Zyra, Michaels architected low-latency data pipelines for equity markets, optimizing for microsecond-level precision in order routing. Learn more about Zyra's leadership team.

"The challenge in crypto isn't raw speed—it's coordinating 50+ independent systems with different API rate limits, data formats, and failure modes," Michaels explained in a March 2025 interview. "The H100 cluster gives us the compute. The hard problem is building the orchestration layer that keeps everything synchronized."

Key Design Decisions

1. Unified API Abstraction

Rather than writing custom code for each exchange, Michaels' team built a common interface layer that normalizes order book data, trade execution, and WebSocket feeds across venues. This abstraction allows the AI models to "see" a consistent view of 50+ markets without exchange-specific logic.
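
One way to picture such an abstraction layer is a shared quote type plus one small adapter per venue. Everything in this sketch is hypothetical: the two venues, their payload field names, and the `Quote` and `ExchangeAdapter` types are invented for illustration and do not describe any real exchange API or Zyra's code.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class Quote:
    """Venue-independent top-of-book snapshot consumed by the models."""
    venue: str
    symbol: str
    bid: float
    ask: float


class ExchangeAdapter(Protocol):
    def normalize(self, raw: dict) -> Quote:
        ...


class VenueAAdapter:
    """Hypothetical venue that sends lowercase symbols and string prices."""

    def normalize(self, raw: dict) -> Quote:
        return Quote("venue_a", raw["s"].upper(), float(raw["b"]), float(raw["a"]))


class VenueBAdapter:
    """Hypothetical venue that sends 'BASE/QUOTE' pairs and numeric prices."""

    def normalize(self, raw: dict) -> Quote:
        return Quote("venue_b", raw["pair"].replace("/", ""),
                     float(raw["bestBid"]), float(raw["bestAsk"]))


if __name__ == "__main__":
    print(VenueAAdapter().normalize({"s": "btcusdt", "b": "100.5", "a": "100.7"}))
    print(VenueBAdapter().normalize({"pair": "BTC/USDT", "bestBid": 100.4, "bestAsk": 100.8}))
```

Downstream code only ever sees `Quote`, so adding a venue means writing one adapter rather than touching the models.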

2. Predictive Rate Limiting

Exchanges enforce API rate limits (e.g., "600 requests per minute"). Exceeding limits triggers temporary bans. Zyra's system uses predictive token bucket algorithms—estimating future request rates and preemptively throttling to stay within limits.
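
For reference, a plain token bucket looks like the sketch below. It shows only the classic algorithm with an injected clock for testability; the predictive part (forecasting future request rates) is not shown, and the class name and parameters are illustrative assumptions.

```python
class TokenBucket:
    """Classic token bucket: tokens refill at a fixed rate up to a cap."""

    def __init__(self, capacity: float, refill_per_sec: float, now: float = 0.0):
        self.capacity = capacity
        self.refill_per_sec = refill_per_sec
        self.tokens = float(capacity)  # start with a full bucket
        self.last = now                # time of the most recent refill

    def try_acquire(self, now: float, cost: float = 1.0) -> bool:
        """Refill for the elapsed time, then spend `cost` tokens if available."""
        elapsed = now - self.last
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_sec)
        self.last = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


if __name__ == "__main__":
    # "600 requests per minute" corresponds to a refill rate of 10 per second.
    bucket = TokenBucket(capacity=600, refill_per_sec=10.0)
    print(bucket.try_acquire(now=0.0))
```

A predictive variant could lower `refill_per_sec` or reserve tokens ahead of a forecast burst, but how Zyra does this is not described in the source.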

"We train a lightweight ML model to predict our own API usage 10 seconds ahead," Michaels noted. "It's meta-learning: using AI to manage the infrastructure that runs AI."

3. Fault Isolation

A bug in one exchange connector shouldn't crash the entire system. Each exchange runs in a separate process with memory isolation. If an API client fails, the orchestrator restarts it in under 2 seconds without affecting other connections.

March 16, 2025 incident: A memory leak in the Kraken API client caused restarts every 18 minutes. The system auto-detected the pattern, flagged the connector as unstable, and rerouted arbitrage orders to alternative venues while engineers patched the leak.
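
Detecting a repeating-restart pattern like this one can be as simple as counting restarts in a rolling window. The sketch below is an assumption about how such a check might work; the three-restarts-per-hour threshold and the `RestartTracker` name are hypothetical.

```python
from collections import deque


class RestartTracker:
    """Flags a connector as unstable after too many restarts in a window."""

    def __init__(self, max_restarts: int = 3, window_s: float = 3600.0):
        self.max_restarts = max_restarts
        self.window_s = window_s
        self._times = deque()  # restart timestamps in seconds, oldest first

    def record_restart(self, ts: float) -> bool:
        """Record a restart at time `ts`; return True if now unstable."""
        self._times.append(ts)
        # Forget restarts that fell out of the rolling window.
        while ts - self._times[0] > self.window_s:
            self._times.popleft()
        return len(self._times) >= self.max_restarts


if __name__ == "__main__":
    tracker = RestartTracker()
    # Restarts every 18 minutes, as in the incident described above.
    for minute in (0, 18, 36):
        print(minute, tracker.record_restart(minute * 60.0))
```

Once a connector is flagged, the orchestrator can stop routing orders to that venue while the process keeps restarting in isolation.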

What to Watch Next

1. Model Transparency Initiatives

As AI trading systems scale, regulators (SEC, CFTC, EU financial authorities) are exploring disclosure requirements for algorithmic strategies. Zyra has signaled intent to participate in industry working groups on model explainability standards.

2. Quantum-Resistant Encryption

With cybersecurity lead Todd Clark's guidance, Zyra is evaluating post-quantum cryptography for API authentication. The timeline: NIST finalized quantum-resistant standards in 2024 (NIST Post-Quantum Standards Release, August 2024); adoption in financial infrastructure is expected 2025–2027.

3. Expansion to Traditional Markets

The infrastructure built for crypto markets—low-latency execution, continuous model training—is transferable to equities, forex, and commodities. Regulatory filings suggest Zyra may pursue FINRA and NFA registrations to operate in U.S. traditional markets.

Frequently Asked Questions

Why NVIDIA H100 instead of competitors (e.g., AMD MI300, Google TPU)?

The H100 offers the best balance of raw compute (teraFLOPS), memory bandwidth (3 TB/s HBM3), and ecosystem maturity (PyTorch, TensorFlow, CUDA libraries). AMD's MI300 is competitive on paper but lacks the software ecosystem depth. Google TPUs are optimized for Google Cloud; Zyra requires on-premise control for exchange co-location.

How does continuous training avoid overfitting to recent market conditions?

Zyra's training pipeline includes temporal cross-validation—models are tested on "out-of-sample" periods (e.g., trained on January–February data, validated on March). Additionally, ensemble methods combine agents trained on different time windows, reducing sensitivity to any single regime.
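
Temporal cross-validation is often implemented as a walk-forward split, where every test window lies strictly after its training window. The generator below is a generic sketch of that idea; the window sizes are arbitrary and the function name is hypothetical.

```python
from typing import Iterator, Tuple


def walk_forward_splits(n_samples: int,
                        train_size: int,
                        test_size: int) -> Iterator[Tuple[range, range]]:
    """Yield (train_indices, test_indices) with test always after train.

    The window slides forward by `test_size` each step, so test windows
    never overlap and no future data leaks into training.
    """
    start = 0
    while start + train_size + test_size <= n_samples:
        train = range(start, start + train_size)
        test = range(start + train_size, start + train_size + test_size)
        yield train, test
        start += test_size


if __name__ == "__main__":
    for train, test in walk_forward_splits(n_samples=10, train_size=4, test_size=2):
        print(list(train), list(test))
```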

What happens if an H100 GPU fails during live trading?

Production inference runs on a single H100 with hot standby. If the primary GPU fails health checks (monitored every 5 seconds), the standby takes over in under 30 seconds. Training clusters use checkpoint-restart: if a GPU fails mid-training, the system loads the last checkpoint (saved every 60 seconds) and resumes on remaining GPUs.

Is the infrastructure scalable beyond 50 exchanges?

Yes. The current architecture supports up to 200 exchange connections with the existing EPYC CPU and InfiniBand network. Scaling beyond that would require additional compute nodes (horizontal scaling) or migration to a Kubernetes-based orchestration layer (already in Zyra's 2026 roadmap).

How does Zyra handle data privacy and security with this hardware?

All training data is encrypted at rest (AES-256) and in transit (TLS 1.3). Access to training clusters is restricted via hardware security modules (HSMs) for key management. Cybersecurity lead Todd Clark oversees quarterly penetration tests and compliance audits.

Can smaller trading firms replicate this infrastructure?

The capital cost ($350K–$450K) is accessible for well-funded teams, but the operational expertise is the true barrier. Distributed systems engineering, GPU cluster management, and low-latency networking require specialized skills. Cloud-based alternatives (e.g., renting H100 instances) offer a lower entry point but sacrifice co-location advantages.

What's the next hardware upgrade Zyra is considering?

NVIDIA announced the GH200 Grace Hopper Superchip (combining ARM CPU + H100 GPU in a single module) with 900 GB/s CPU-GPU interconnect (NVIDIA GH200 Product Page). Zyra's engineering team is evaluating GH200 for the next infrastructure refresh, likely in late 2025 or early 2026, targeting 2× inference throughput for real-time decision-making.

Disclaimer: This content is for informational purposes only and does not constitute financial, investment, or legal advice. Cryptocurrency investments carry substantial risk, including the potential loss of principal. Past performance of trading strategies or infrastructure is not indicative of future results. Zyra Capital's AI trading systems involve complex algorithms and high-frequency execution across volatile markets; operational risks, model risks, and execution risks are inherent. Hardware specifications and performance metrics cited are based on manufacturer specifications and internal testing as of March 2025; actual results may vary. Always conduct your own research and consult a qualified financial advisor before making investment decisions. For full risk disclosures, visit https://zyracapital.io/en/compliance/risk-disclosure.